Salvaging the Smash MVP. Five months. One cut list.

Problem

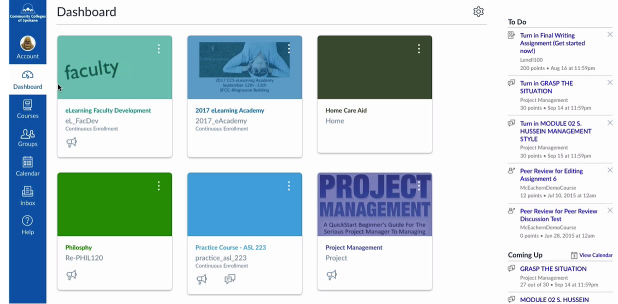

Eighteen months of offshore development. Three engineering teams. A waterfall contract written before there was a product. An MVP that beta users described as confusing. Active students in live cohorts who couldn't describe what the platform was for.

Needs

Ship in five months. Recover credibility with the founder's first three corporate prospects. Stop the dev cost bleed. Make it possible for the offshore team to finish without rework. Don't break the live cohorts mid-semester.

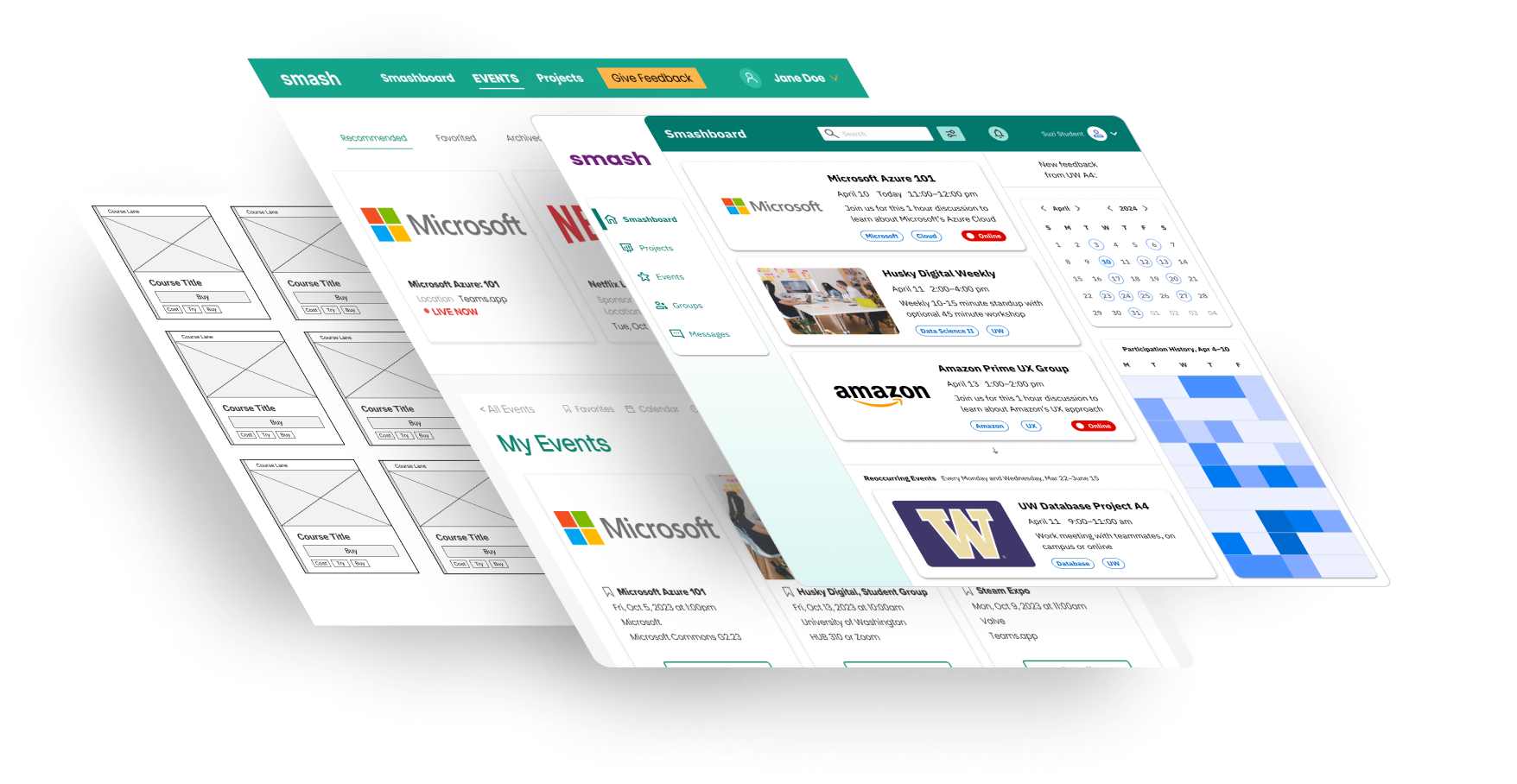

Solution

Renegotiate the contract before writing a line of design. Migrate to Open edX before designing a component. Cut three in-flight features before building any new ones. Design system before screens. Card grid before search forms.

Less product, more clearly explained.

Competitive audit

11 platforms · 3 axes

| Platform | Learning & upskilling | Real-world projects | Hiring enablement | Notes |
|---|---|---|---|---|
| Smash | Integrated coaching & progress tracking | Live industry projects with companies | Direct pipelines to hiring partners | Full-stack integration |
| Riipen | Depends on partner institutions | Course-based company projects | Indirect; visibility, not direct matching | Needs educator buy-in |
| Forage | Structured virtual experiences | Simulated employer-branded tasks | Brand awareness, not placement | Asynchronous + self-paced |
| Acadium | Apprenticeship training | Micro-internships with small businesses | Focused on freelance & early-career roles | Niche, limited in scale |
| SV Academy | Full-time training cohorts | Internal or simulated projects | Placement into partner companies | Specific to sales roles |
| Handshake | Light skill development | Few real-world experiences | Job and internship board | Widespread adoption, weak on depth |
| Piazza Careers | Light engagement, mostly forum-based | Minimal project integration | Employer discovery & outreach | Recruitment layer |
| Coursera / edX | Robust credentialed learning | Occasional capstones or simulations | No direct job placement | Strong academic partnerships |
| NovoEd | Project-based learning | Mostly internal learning simulations | No external hiring connections | Used in corporate settings |
| Turing / Karat | No learning component | Simulated coding challenges | Job matching for vetted candidates | Focused on engineering |
| | Passive skill building | No structured projects | Massive hiring network | Broad but shallow integration |

No competitor was doing all three — meaningful learning, real industry projects, and direct hiring pipelines — in a single integrated platform. That gap became the foundation for every scope decision I made.

Renegotiate the SOW before designing anything.

Replaced the open-ended waterfall arrangement with sprint-based delivery and explicit acceptance criteria. The design problems were downstream of process problems. Those had to move first.

Two months before a pixel moved. Hard sell to a founder watching runway burn. But every design decision I made later was only possible because the contract allowed it.

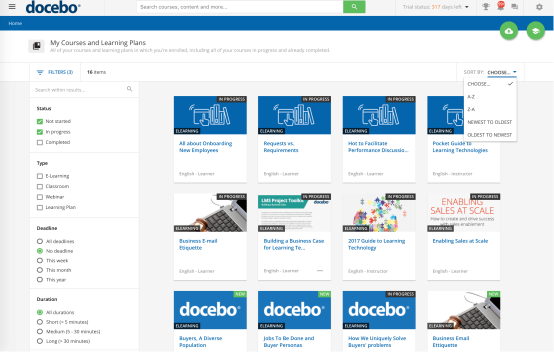

Migrate to Open edX mid-redesign instead of finishing on the custom platform.

I was partway through a first full redesign when the case for migrating became undeniable. Open edX: open-source (AGPLv3-licensed core platform), comprehensive LMS features already built. The choice freed engineering hours for features that actually differentiated the product and saved $220K+ in build costs.

Constrained the visual design to Open edX's component model. The first redesign's visual direction had to be adapted, not transplanted. The final aesthetic was also shaped by an in-progress Microsoft Learning partnership. We gave two product demos for their team, came close to landing the contract, and never heard why we didn't. Some constraints aren't design decisions at all.

Worth every tradeoff. Should have pushed for this move three months earlier.

Remove the personalized eligibility metric before it shipped.

User research made the call. "What happens if it's wrong?" Students didn't trust a number they couldn't interrogate, especially one that decided their access to real opportunities. I replaced it with explicit, transparent eligibility criteria.

Lost the product's marquee differentiator. Gained trust. An eligibility system that's legible and fair matters more than one that's impressive but opaque.
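A minimal sketch of what "legible" can mean in practice, assuming Python on the backend. The two rules, the `Student` fields, and the module threshold are hypothetical stand-ins; the real criteria aren't listed in this write-up. The point is only that each rule is evaluated and labeled on its own, so a student can see exactly which one is unmet instead of a single opaque score.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Student:
    # Hypothetical fields; the real criteria are not given in this write-up.
    completed_modules: int
    has_coach_signoff: bool


@dataclass(frozen=True)
class Criterion:
    label: str  # plain-language rule shown to the student
    met: bool   # whether this student currently satisfies it


def eligibility_report(student: Student, required_modules: int = 3) -> list[Criterion]:
    """Evaluate every rule separately so each one can be displayed."""
    return [
        Criterion(
            f"Completed at least {required_modules} modules",
            student.completed_modules >= required_modules,
        ),
        Criterion("Coach sign-off received", student.has_coach_signoff),
    ]


def is_eligible(report: list[Criterion]) -> bool:
    """Eligible only when every visible criterion is met; no hidden score."""
    return all(c.met for c in report)


def unmet(report: list[Criterion]) -> list[str]:
    """Labels of the rules a student still has to satisfy."""
    return [c.label for c in report if not c.met]
```

Because the report is a list of labeled checks, the answer to "what happens if it's wrong?" becomes a specific rule a student can point to and contest.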

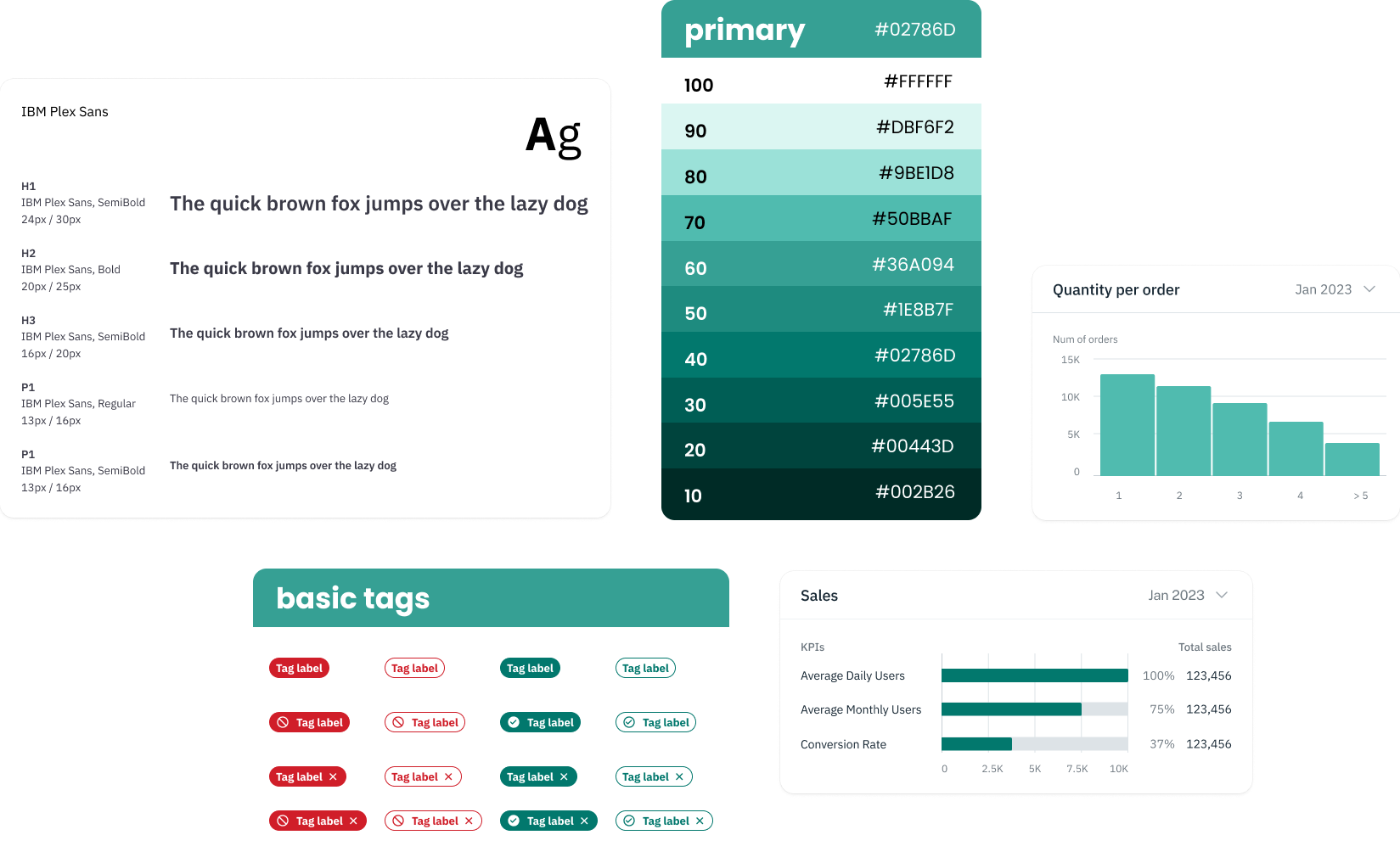

Build on Ant Design before redesigning any product screens.

Comprehensive component library, strong data display patterns, thorough documentation that reduced ambiguity in remote handoffs. Customized color, type, and card patterns on top. Adapted again for Open edX's native React components after the migration.

Visually constrained to Ant's model early on. A more bespoke system would have been more impressive, and would not have shipped in the window we had.

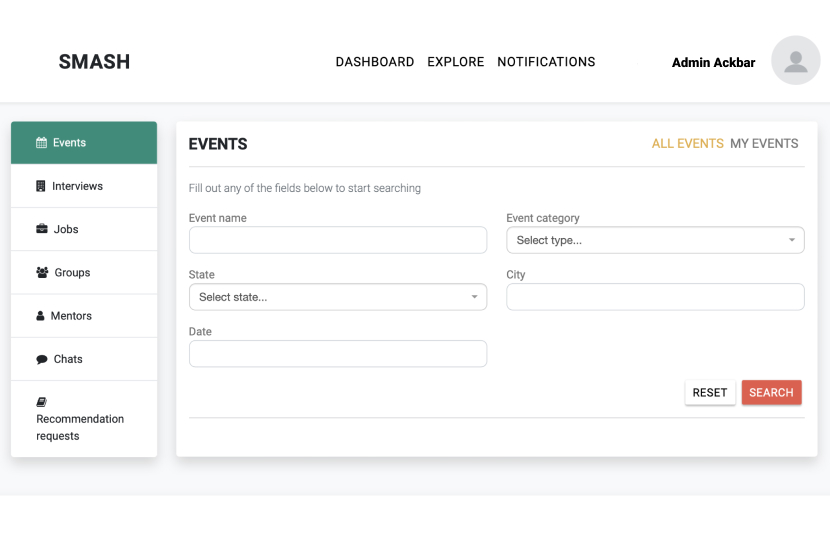

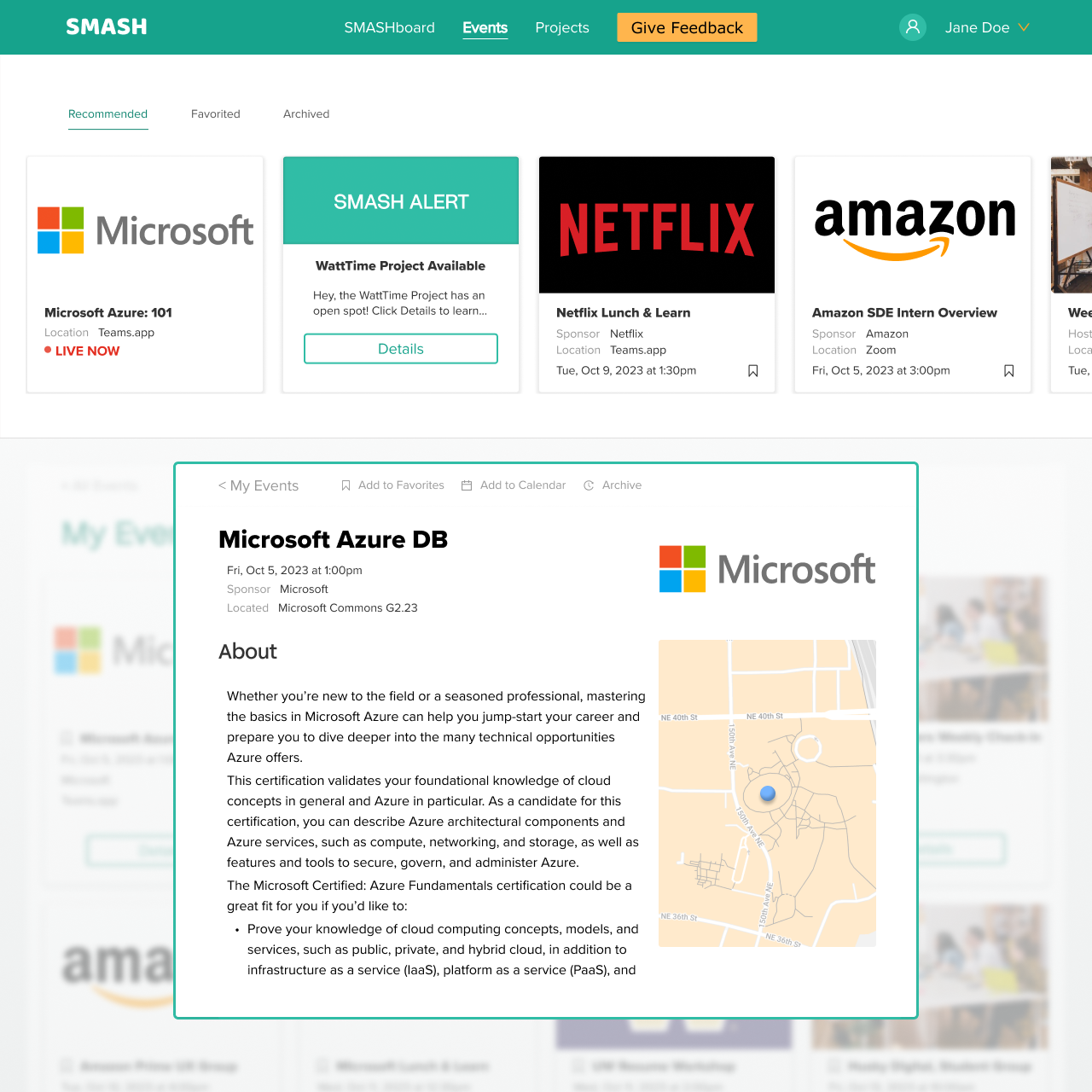

Replace form-based event search with a browsable card grid and popup detail overlay.

Students didn't know what kinds of events existed, so they didn't know what to search for. The original page led with a blank form. The first redesign replaced it with all eligible events displayed upfront; clicking a card opens a detail overlay with a blurred background, dismissible by clicking outside — without losing grid context. The Open edX version carried this interaction pattern forward within platform constraints.

More surface area to design and maintain. Required building a real filtering system to replace the old search form. Worth it: the core flow went from opaque to instantly legible, and the interaction pattern held across two platform generations.
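One way to picture the difference between the old search form and the grid's filters, as a hedged Python sketch. The `EventCard` shape and the `kind` and `mode` facets are hypothetical; the actual filter options aren't documented here. The behavior that matters is the default: with nothing selected, every eligible event is shown.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EventCard:
    # Hypothetical facets; the real filter options are not listed here.
    title: str
    kind: str  # e.g. "workshop", "hackathon"
    mode: str  # "remote" or "in-person"


def filter_cards(cards, kinds=frozenset(), modes=frozenset()):
    """Narrow the grid by facet; an empty selection means no restriction."""
    return [
        card
        for card in cards
        if (not kinds or card.kind in kinds)
        and (not modes or card.mode in modes)
    ]
```

A blank form asks the student to supply the vocabulary. Filters that start empty let the grid supply it and only narrow on request.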

Filter in-person events to within 100 miles of the student's location.

Goal: reduce noise. Show students events they could actually attend, not everything that existed. Remote-only events show no map; in-person events show a venue thumbnail.

May have hidden opportunities some students would have wanted regardless of distance. A judgment call that deserved more data than I had at the time.
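For reference, a distance cutoff like this can be sketched with the haversine great-circle formula. This is an illustration in Python, not the shipped implementation: the `Event` shape is hypothetical, and it assumes remote events bypass the distance check entirely. Making `max_miles` a parameter is also the cheapest way to revisit the judgment call later.

```python
import math
from dataclasses import dataclass
from typing import Optional

EARTH_RADIUS_MILES = 3958.8  # mean Earth radius
MAX_DISTANCE_MILES = 100     # the cutoff described above


@dataclass(frozen=True)
class Event:
    # Hypothetical shape; remote events carry no coordinates.
    title: str
    is_remote: bool
    lat: Optional[float] = None
    lon: Optional[float] = None


def haversine_miles(lat1, lon1, lat2, lon2):
    """Great-circle distance in miles between two lat/lon points (degrees)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = (
        math.sin(dphi / 2) ** 2
        + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2
    )
    return 2 * EARTH_RADIUS_MILES * math.asin(math.sqrt(a))


def visible_events(events, student_lat, student_lon, max_miles=MAX_DISTANCE_MILES):
    """Keep remote events (assumed exempt) and in-person events within range."""
    shown = []
    for event in events:
        if event.is_remote:
            shown.append(event)
        elif haversine_miles(student_lat, student_lon, event.lat, event.lon) <= max_miles:
            shown.append(event)
    return shown
```

For a student in New York City, an in-person event in Philadelphia falls inside the cutoff and one in Boston falls outside it, which is exactly the kind of hidden opportunity the tradeoff above describes.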

If I did it again

Push for the Open edX migration earlier. The savings were so significant that an earlier move could have materially changed the company's trajectory. I understood the value; I didn't advocate hard enough, fast enough. The first redesign work wasn't wasted, but it could have been applied to the right platform from day one.

Make the cut list a public artifact from day one. Half the founder's reluctance to cut features came from never having seen them stack-ranked next to each other. Once the doc existed, the conversation took two days. Without it, it had been stuck for months.

Start usability sessions in week one, not week three. The screens I redesigned in weeks 1–3 were the ones I redesigned again in weeks 8–10, because I hadn't yet watched anyone use them. Even two sessions in week one would have changed my starting assumptions.